Robotic Graffiti Tagger!

13 January 2010 / announcement, code, project

A labor-saving device for graffiti artists. An assistive tool or telematic proxy for taggers working in harsh environments. Long-needed relief for graffiti artists with RSI. Or simply, pure research into as-yet-untrammeled intersections of automation and architecture. We give you: the ROBOTAGGER, an industrial robot arm programmed with GML, the new “Graffiti Markup Language” created by Evan Roth and pals at the F.A.T. Lab:

(ROBOTAGGER, a robot arm that writes out GML, the common markup language for graffiti)

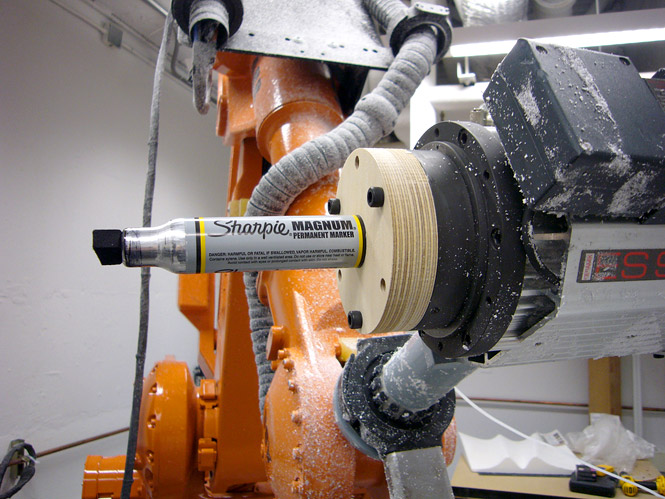

This quick project came together over the past weekend in CMU’s Digital Fabrication Laboratory (dFAB), directed by my friend and colleague, Professor Jeremy Ficca. Inspired by a tweet from Evan Roth, one of the co-creators of GML, we reckoned it would be easy to transcode GML into a file format suitable for robotic CAD/CAM machining. The result is a small Processing utility that converts GML into DXF and CSV (you can download the GML-to-DXF source code here). After tinkering around for a while, we developed a pipeline for converting the GML/DXF strokes from 000000book.com into machining paths for the dFAB’s ABB IRB-4400, an eight-foot-tall industrial robot arm. Our first test was a “hello world” scrawl which, not coincidentally, was also one of the first GML files ever created (148.GML at 000000book.com). But our real objective, which you can see in the video above, was to give physical form to GML tags produced by TEMPT ONE (Tony Quan), a graffiti writer with Lou Gehrig’s disease who produced the digital GML recording with the FAT Lab’s well-known EyeWriter software. Although there’s been a lot of data loss and translation along the way, it’s not completely unreasonable to think of the Robotagger as a prosthesis for Tony. I hope we can pursue this possibility a little further.
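For readers curious what a GML-to-DXF/CSV transcoder involves, here is a minimal Python sketch. It is not the Processing utility mentioned above. It assumes the common GML layout of `<stroke>` elements containing `<pt>` points with `<x>` and `<y>` children, and it writes a bare-bones ASCII DXF containing only LINE entities. The function names, the sample tag, and the `scale` parameter are all hypothetical, chosen for illustration.

```python
import csv
import io
import xml.etree.ElementTree as ET

# A tiny hand-written GML document (assumed layout, not a real tag).
SAMPLE_GML = """<gml spec="1.0">
  <tag>
    <drawing>
      <stroke>
        <pt><x>0.0</x><y>0.0</y><time>0.00</time></pt>
        <pt><x>0.5</x><y>0.25</y><time>0.05</time></pt>
        <pt><x>1.0</x><y>0.0</y><time>0.10</time></pt>
      </stroke>
      <stroke>
        <pt><x>0.2</x><y>0.8</y><time>0.30</time></pt>
        <pt><x>0.8</x><y>0.8</y><time>0.35</time></pt>
      </stroke>
    </drawing>
  </tag>
</gml>"""


def parse_gml_strokes(gml_text):
    """Return a list of strokes, each a list of (x, y) float tuples."""
    root = ET.fromstring(gml_text)
    strokes = []
    for stroke in root.iter("stroke"):
        points = [(float(pt.findtext("x")), float(pt.findtext("y")))
                  for pt in stroke.iter("pt")]
        if points:
            strokes.append(points)
    return strokes


def strokes_to_csv(strokes):
    """Serialize strokes as CSV rows: stroke index, x, y."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["stroke", "x", "y"])
    for index, points in enumerate(strokes):
        for x, y in points:
            writer.writerow([index, x, y])
    return buf.getvalue()


def strokes_to_dxf(strokes, scale=1.0):
    """Emit a minimal ASCII DXF: one LINE entity per stroke segment."""
    out = ["0", "SECTION", "2", "ENTITIES"]
    for points in strokes:
        # Pair each point with its successor to form line segments.
        for (x1, y1), (x2, y2) in zip(points, points[1:]):
            out += ["0", "LINE", "8", "0",
                    "10", f"{x1 * scale:.4f}", "20", f"{y1 * scale:.4f}",
                    "11", f"{x2 * scale:.4f}", "21", f"{y2 * scale:.4f}"]
    out += ["0", "ENDSEC", "0", "EOF"]
    return "\n".join(out) + "\n"


strokes = parse_gml_strokes(SAMPLE_GML)
csv_text = strokes_to_csv(strokes)
dxf_text = strokes_to_dxf(strokes, scale=100.0)
```

GML coordinates are typically normalized, so a real pipeline would still need to choose a physical scale, and possibly flip the Y axis, before handing the DXF to CAM software.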

Speaking of future directions, there are lots of interesting research topics latent here in automated calligraphy. We were astonished to realize just how important the force-feedback of pressure is to the visual quality of the drawings. (The first 20 seconds of the video show what I mean in an extreme way: we shattered a marker and sent ink everywhere when our estimate of the Z-plane turned out to be off by a quarter-inch. Looks like we need to get that force-measuring software extension that ABB sells.) Going forward, we’re interested in exploring robotic performances of higher-dimensional gesture data, such as that produced by Wacom tablets, which provide high-resolution information about the pressure, azimuth and elevation (yaw and pitch) of the tagger’s stylus. Watch this space: I’ll be developing some tools to help the next version of GML encode this information.
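To make the Z-plane problem concrete, here is a small Python sketch of one way recorded stylus pressure could be turned into marker height. It is a thought experiment, not what we ran on the robot. Every name and number in it is an assumption: `z_contact_mm` is the estimated height at which the tip just touches the surface, `max_press_mm` caps how far the tip may be pushed past that point, and `lift_mm` is the clearance used between strokes.

```python
def pressure_to_z(pressure, z_contact_mm, max_press_mm=3.0):
    """Map stylus pressure in [0, 1] to a tool height in millimeters.

    Zero pressure rests the tip at the estimated contact plane; full
    pressure pushes it max_press_mm lower. Out-of-range input is clamped
    so a bad sample cannot drive the marker deeper than the cap.
    """
    p = min(max(pressure, 0.0), 1.0)
    return z_contact_mm - p * max_press_mm


def stroke_to_toolpath(points, z_contact_mm, lift_mm=25.0, max_press_mm=3.0):
    """Turn (x, y, pressure) samples into (x, y, z) waypoints.

    The path starts above the first sample, follows the stroke at
    pressure-dependent depth, and retracts above the last sample.
    """
    if not points:
        return []
    z_safe = z_contact_mm + lift_mm
    first_x, first_y, _ = points[0]
    path = [(first_x, first_y, z_safe)]  # approach above the start
    for x, y, pressure in points:
        path.append((x, y, pressure_to_z(pressure, z_contact_mm, max_press_mm)))
    last_x, last_y, _ = points[-1]
    path.append((last_x, last_y, z_safe))  # retract after the stroke
    return path


path = stroke_to_toolpath([(0, 0, 0.5), (10, 0, 1.0)], z_contact_mm=100.0)
```

Note that everything here hinges on `z_contact_mm` being right. A quarter-inch error (about 6 mm) is twice the 3 mm cap assumed above, which is why measuring force directly is more robust than estimating the plane.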

The Robotagger Unmanned Graffiti System is a collaboration of Jeremy Ficca’s dFAB at CMU; the STUDIO for Creative Inquiry at Carnegie Mellon, which I direct; and the FAT Lab’s GML initiative. We used the Sharpie Magnum and the wonderful 2-inch Montana Hardcore markers, which (AFAIK) are the largest magic markers in commercial production. (And of course, for the deep history of prior work blending graffiti and automation, don’t forget to check out the spraycan-enabled Graffiti Writer robot [1998-2000] by the Institute for Applied Autonomy, and Jürg Lehni’s wall-spraying Hektor robot [2002].) [Extra links: RoboTagger on YouTube]
